Modern lenses tend to be large and expensive, with multiple glass elements combining to minimise optical aberrations. But what if we could just use a cheap single-element lens, and remove those aberrations computationally instead? This is the question scientists at the University of British Columbia and University of Siegen are asking, and they've come up with a way of improving images from a simple single-element lens that gives pretty impressive results.

The method is described in detail in the researchers' paper. It works by understanding the lens's 'point spread function' - the way point light sources are blurred by the optics - and how this changes across the frame. Knowing this, in principle it's possible to analyse an image from a simple lens and reconstruct how it should look, through a computational process known as 'deconvolution'.
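To make the idea of deconvolution concrete, here is a minimal sketch in Python using NumPy. It is not the researchers' method: it uses classic Wiener deconvolution, and it assumes a single known point spread function that is the same everywhere in the frame, whereas the blur of a real lens varies across the image. The Gaussian kernel, the function names and the `noise_to_signal` parameter are all illustrative assumptions.

```python
import numpy as np

def gaussian_psf(size, sigma):
    """Hypothetical stand-in PSF: a normalised 2-D Gaussian blur kernel."""
    ax = np.arange(size) - size // 2
    xx, yy = np.meshgrid(ax, ax)
    psf = np.exp(-(xx**2 + yy**2) / (2.0 * sigma**2))
    return psf / psf.sum()

def pad_psf(psf, shape):
    """Embed the PSF in a zero array of `shape`, centred on pixel (0, 0)."""
    padded = np.zeros(shape)
    h, w = psf.shape
    padded[:h, :w] = psf
    # Shift so the kernel centre sits at the origin, as the FFT expects.
    return np.roll(padded, (-(h // 2), -(w // 2)), axis=(0, 1))

def blur(image, psf):
    """Simulate the lens: circular convolution of the image with the PSF."""
    H = np.fft.fft2(pad_psf(psf, image.shape))
    return np.real(np.fft.ifft2(np.fft.fft2(image) * H))

def wiener_deconvolve(blurred, psf, noise_to_signal=1e-3):
    """Estimate the sharp image from a blurred one, given a known PSF."""
    H = np.fft.fft2(pad_psf(psf, blurred.shape))
    G = np.fft.fft2(blurred)
    # Wiener filter: conj(H) / (|H|^2 + K) avoids dividing by near-zero values.
    F = np.conj(H) * G / (np.abs(H) ** 2 + noise_to_signal)
    return np.real(np.fft.ifft2(F))

# Synthetic test scene (an assumption for illustration): a bright square.
rng = np.random.default_rng(0)
sharp = np.zeros((64, 64))
sharp[20:44, 20:44] = 1.0
psf = gaussian_psf(9, 1.5)

# Blur the scene and add a little sensor-like noise, then try to undo the blur.
blurred = blur(sharp, psf) + rng.normal(0, 1e-3, sharp.shape)
restored = wiener_deconvolve(blurred, psf)

# Mean squared error against the original scene, before and after restoration.
err_blurred = np.mean((blurred - sharp) ** 2)
err_restored = np.mean((restored - sharp) ** 2)
print(err_blurred, err_restored)
```

The `noise_to_signal` constant is the trade-off knob in this sketch: smaller values recover more fine detail but amplify noise, while larger values give a smoother, softer result.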

This isn't a new idea, but the team of researchers claim to have made some key advances in the field, making their method more robust than those previously suggested. For example, chromatic aberration means that simple lenses can give detailed information in one colour channel with significant blur in the others, so they've decided to use cross-channel information to reconstruct the finest detail possible.

One serious problem with deconvolution approaches is that they often struggle to reach a single 'best' solution. The group claims to have solved this by optimising each colour channel in turn, rather than trying to deal with them all simultaneously.

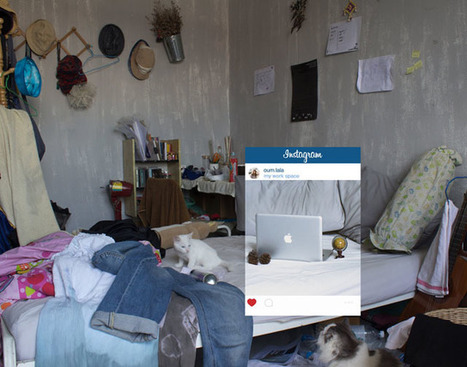

This is all very clever, of course, but does it work? The group shows several before and after examples on its website, shot using a simple F4.5 plano-convex lens on a Canon EOS 40D, and the results are quite impressive.

We come across simple lenses from time to time. The idea here is to pair such a lens with a computer that corrects the image so it looks better. The basic starting point is figuring out how each point of light is blurred by the optics, and how that blur varies by color and across the frame.